Unity Catalog Create Table

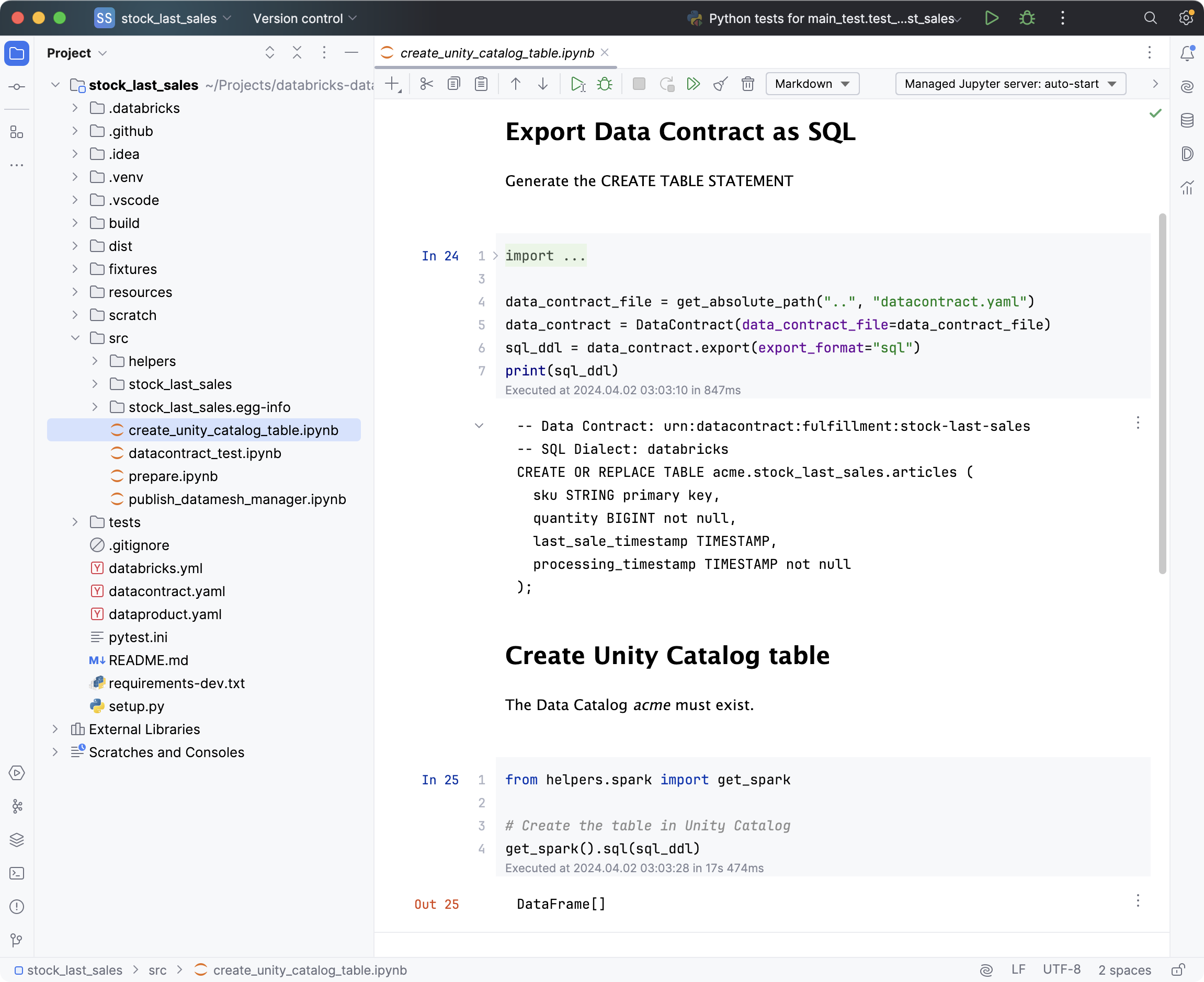

Unity Catalog lets you create managed tables and external tables. Managed tables are the default when you create tables in Databricks. In this example, you'll run a notebook that creates a table named department in the workspace catalog and default schema (database). Unity Catalog makes it easy for multiple users to collaborate on the same data assets.

To create a catalog, you can use Catalog Explorer, a SQL command, the REST API, the Databricks CLI, or Terraform. When you create a catalog, two schemas (databases)… To create a new schema in the catalog, you must have the CREATE SCHEMA privilege on the catalog.

Use materialized views in Databricks SQL. Publish datasets from Unity Catalog to Power BI directly from data pipelines. Update Power BI when your data updates. Significantly reduce refresh costs by… Since its launch several years ago, Unity Catalog has…

To create a table in a storage format such as Parquet, ORC, Avro, CSV, JSON, or text, use the bin/uc table create command. The open source project is developed in the unitycatalog/unitycatalog repository on GitHub. For Apache Spark and Delta Lake to work together with Unity Catalog, you need at least Apache Spark 3.5.3 and Delta Lake 3.2.1.
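The minimum versions quoted above (Apache Spark 3.5.3 and Delta Lake 3.2.1) can be checked before any table is created. The following is a minimal sketch, not part of Unity Catalog itself: the helper names `parse_version`, `meets_minimum`, and `check_versions` are hypothetical, and the parser assumes plain dotted numeric versions, optionally followed by a `-` or `+` suffix.

```python
from typing import List, Tuple

# Minimum versions stated in the text above.
MIN_SPARK = "3.5.3"
MIN_DELTA = "3.2.1"


def parse_version(version: str) -> Tuple[int, ...]:
    """Turn '3.5.3' into (3, 5, 3), dropping any '-' or '+' suffix."""
    core = version.split("-")[0].split("+")[0]
    return tuple(int(part) for part in core.split("."))


def meets_minimum(installed: str, minimum: str) -> bool:
    """Compare versions component by component using tuple ordering."""
    return parse_version(installed) >= parse_version(minimum)


def check_versions(spark_version: str, delta_version: str) -> List[str]:
    """Return a list of human-readable problems; empty means both are fine."""
    problems = []
    if not meets_minimum(spark_version, MIN_SPARK):
        problems.append(
            f"Apache Spark {spark_version} is older than {MIN_SPARK}"
        )
    if not meets_minimum(delta_version, MIN_DELTA):
        problems.append(
            f"Delta Lake {delta_version} is older than {MIN_DELTA}"
        )
    return problems
```

In a live session the installed Spark version is usually available as `spark.version`; how the Delta Lake version is obtained depends on how the package was installed, so it is passed in as a plain string here.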
The CLI tool allows users to interact with a Unity Catalog server to create and manage catalogs, schemas, tables across different formats, volumes with unstructured data, functions, ML and… The bin/uc table create command has multiple parameters. The full name of the table, which is a concatenation of the…

Suppose you need to work together on a Parquet table with an external client. You can use an existing Delta table in Unity Catalog that includes a…

Create catalog and managed table. See Work with managed tables. This article describes how to create and refresh materialized views in Databricks SQL to improve performance and reduce the cost of… Sharing the Unity Catalog across Azure Databricks environments.
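As a rough illustration of the table create command and its parameters, the sketch below assembles an argument list in Python. Several things here are assumptions rather than facts taken from this page: the full name is assumed to follow the three-level `catalog.schema.table` pattern, the flag names `--full_name`, `--columns`, `--storage_location`, and `--format` are recalled from the open source project's CLI documentation and should be verified with the CLI's own help output, and the helper names and sample values are hypothetical.

```python
import shlex
from typing import List, Optional


def full_table_name(catalog: str, schema: str, table: str) -> str:
    """Join the three name parts with dots (assumed naming pattern)."""
    parts = [catalog, schema, table]
    for part in parts:
        if not part or "." in part:
            raise ValueError(f"invalid name part: {part!r}")
    return ".".join(parts)


def uc_table_create_args(
    full_name: str,
    columns: str,
    storage_location: str,
    table_format: Optional[str] = None,
) -> List[str]:
    """Build the argument list for `bin/uc table create`.

    Flag names are assumptions; check them against the CLI help.
    """
    args = [
        "bin/uc", "table", "create",
        "--full_name", full_name,
        "--columns", columns,
        "--storage_location", storage_location,
    ]
    if table_format is not None:
        args += ["--format", table_format]
    return args


if __name__ == "__main__":
    # Hypothetical catalog, schema, table, columns, and path.
    name = full_table_name("unity", "default", "department")
    args = uc_table_create_args(
        name, "deptcode INT, deptname STRING", "/tmp/uc/department", "DELTA"
    )
    # shlex.join quotes arguments that contain spaces, so the printed
    # line can be pasted into a shell.
    print(shlex.join(args))
```

Returning a list instead of a single string keeps the arguments safe to pass to `subprocess.run` without shell quoting issues.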
Unity Catalog managed tables always use Delta Lake. Use the bin/uc table create command to create a new Delta table in your Unity Catalog. You create a copy of the… To create an external table, run the command in a notebook or the SQL query editor.
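The difference between a managed and an external table can be sketched as a small statement builder. This is an illustration under stated assumptions, not the exact commands from the Databricks documentation: the helper `create_table_sql`, the column list, and the storage path are hypothetical, and the sketch relies on the standard Databricks SQL form in which adding a `LOCATION` clause to `CREATE TABLE` makes the table external.

```python
from typing import Optional


def create_table_sql(
    full_name: str, columns: str, location: Optional[str] = None
) -> str:
    """Build a CREATE TABLE statement.

    Without a location the table is managed; with a location it is
    external. Locations containing quotes are rejected rather than
    escaped, to keep the sketch simple and safe.
    """
    statement = f"CREATE TABLE IF NOT EXISTS {full_name} ({columns})"
    if location is not None:
        if "'" in location or '"' in location:
            raise ValueError("location must not contain quote characters")
        statement += f" LOCATION '{location}'"
    return statement


if __name__ == "__main__":
    # Hypothetical columns for the department table named in the text.
    cols = "deptcode INT, deptname STRING"
    print(create_table_sql("workspace.default.department", cols))
    # Hypothetical storage path for the external variant.
    print(create_table_sql(
        "workspace.default.department_ext", cols,
        "s3://example-bucket/department",
    ))
```

Either statement could then be pasted into the SQL query editor or passed to `spark.sql(...)` in a notebook.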
Unity Catalog (UC) is the foundation for all governance and management of data objects in the Databricks Data Intelligence Platform.

How to Read Unity Catalog Tables in Snowflake, in 3 Easy Steps

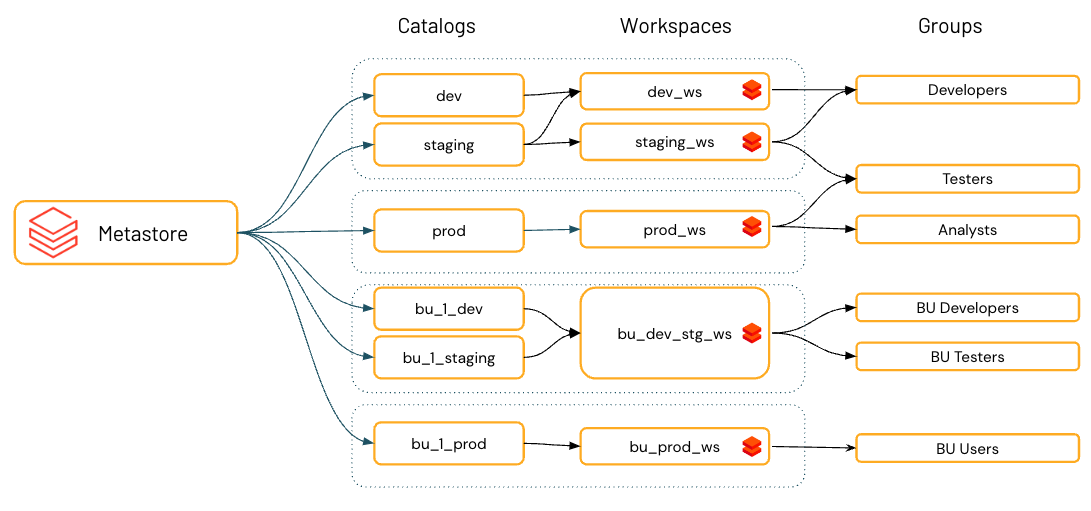

Demystifying Azure Databricks Unity Catalog Beyond the Horizon...

Unity Catalog best practices Azure Databricks Microsoft Learn

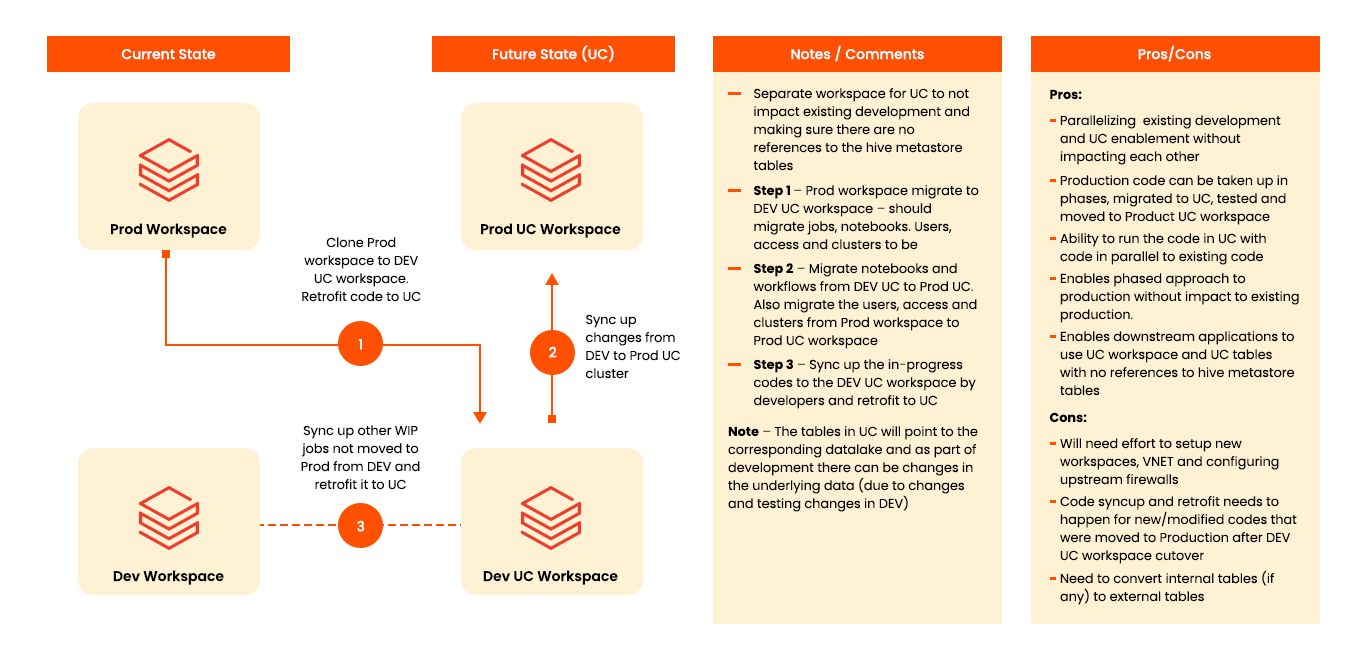

Unity Catalog Migration A Comprehensive Guide

Step by step guide to setup Unity Catalog in Azure La data avec Youssef

Upgrade Hive Metastore to Unity Catalog Databricks Blog

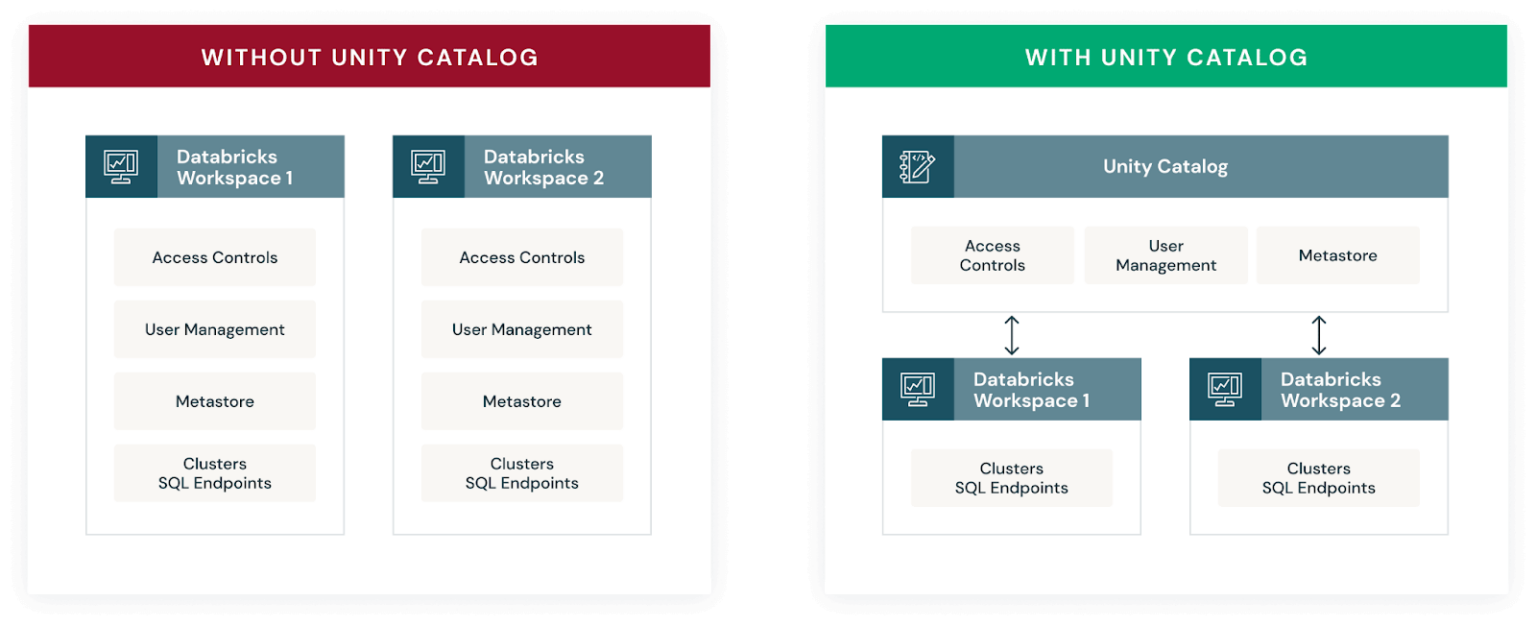

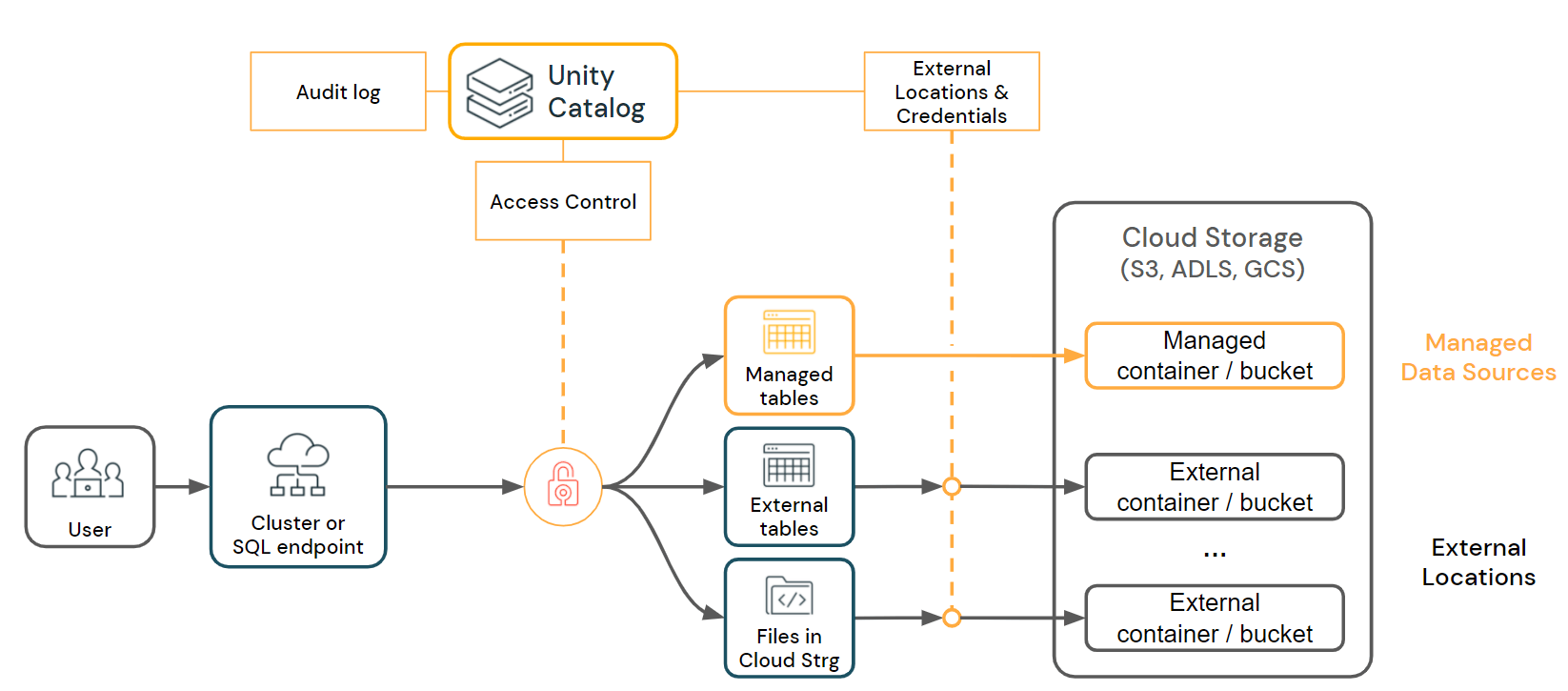

Introducing Unity Catalog A Unified Governance Solution for Lakehouse

Build a Data Product with Databricks

Ducklake A journey to integrate DuckDB with Unity Catalog Xebia
